Social media platform X has announced that creators who post AI-generated war videos without clearly labeling them will be suspended from the platform's revenue sharing program for 90 days. The measure aims to curb the growing risk of misinformation as content purporting to come from conflict zones proliferates on the platform.

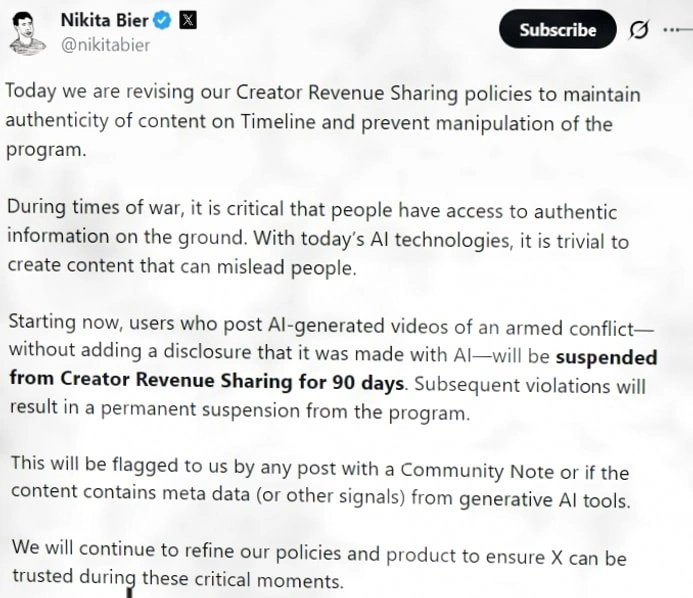

X head of product Nikita Bier stated, "During times of war, it is crucial for the public to have access to accurate and reliable information from the field. Today's AI technology is capable of generating highly realistic fake content that can easily mislead the public." The policy uses economic incentives to enforce transparency, tying creators' eligibility for revenue sharing directly to their obligation to disclose AI-generated content.

Unlike traditional moderation methods that rely solely on labels or content takedowns, the new rule is the first to use the platform's monetization mechanism as an enforcement tool. When the system identifies an undeclared AI-generated war video, whether through crowdsourced Community Notes, metadata analysis, or detection of AI-generation traces, the penalty is triggered automatically. Accounts that repeatedly violate the rule may be permanently disqualified from revenue sharing.

Notably, the policy applies only to videos depicting armed conflict and is not a blanket ban on AI-generated content. The platform emphasizes that its goal is to stop AI from being used to fabricate war footage that inflames emotions or manipulates public opinion, not to restrict creative freedom.

The policy arrives as the ongoing conflict in the Middle East fuels heated debate across social media worldwide. Fabricated videos are spreading rapidly across platforms, deepening public confusion and pushing platforms toward stricter governance measures.